-

Notifications

You must be signed in to change notification settings - Fork 60

ENH Added evaluation #69

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Conversation

|

@merveenoyan Did you ping us because we should already take a look or just to let us know? |

|

@BenjaminBossan I pinged you to mainly look at UX of the evaluation and let me know if the direction is right. |

|

Hmm, not so much I can say about this yet. It is very specific to HF, right? E.g. IIUC we assume that the |

|

@BenjaminBossan having metrics in metadata is essentially for HF Hub, it also passes them to paperswithcode to associated task on associated dataset. (let's say you have text-classification, you'd like to evaluate it on GLUE) this is not the case usually for tabular models imo as people split their data on their own. I don't expect heavy usage for this for when you put your model for outside world, if someone else wants to benchmark their own models and programmatically access the best metrics, it would be good IMHO. |

|

Overall I'd say it's in the right direction. I would change it a bit so that the method accepts the results of a scorer instead of trying to get the name and call it itself. It can be simplified by accepting a float value and a metric name. We probably also want to have it more like: instead of a list of (data description, metric specs) as is right now. But it seems like the eval results can't support that? |

|

@adrinjalali with #71 I think we can let the user add |

|

Yeah that would also make sense. I'm impartial on whether to construct |

|

I would tend towards not exposing modelcards internals to the user. |

|

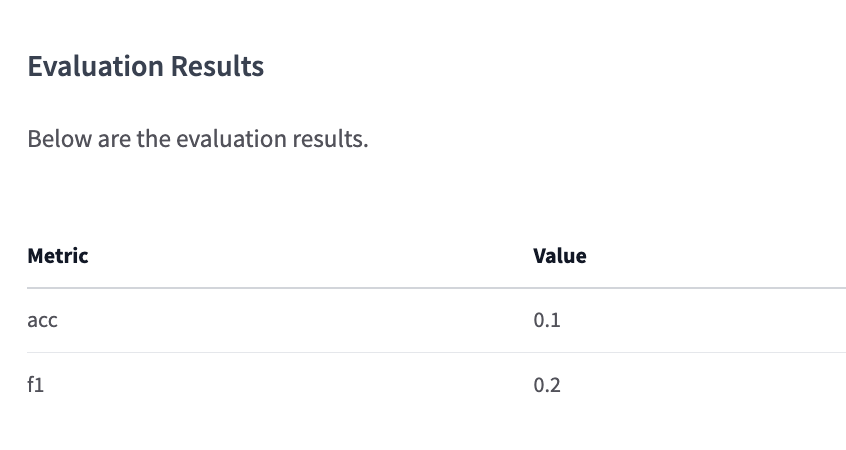

BTW evaluation metrics can be seen in this repository: |

skops/card/_model_card.py

Outdated

| self : object | ||

| Card object. | ||

| """ | ||

| self._eval_results = tabulate( |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

should we add to a table or set the table? The current code doesn't allow the user to call this method multiple times.

Co-authored-by: Adrin Jalali <adrin.jalali@gmail.com>

Co-authored-by: Adrin Jalali <adrin.jalali@gmail.com>

Co-authored-by: Adrin Jalali <adrin.jalali@gmail.com>

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Thanks @merveenoyan

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

This is almost good to go from my point of view. I have a suggestion for better formatting of one of the examples, which you may or may not agree with. But I think the test needs to be changed, as I think it doesn't work as intended.

Co-authored-by: Benjamin Bossan <BenjaminBossan@users.noreply.github.com>

Co-authored-by: Adrin Jalali <adrin.jalali@gmail.com>

Co-authored-by: Adrin Jalali <adrin.jalali@gmail.com>

|

@adrinjalali I addressed your comments. |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Otherwise LGTM.

Co-authored-by: Adrin Jalali <adrin.jalali@gmail.com>

|

@BenjaminBossan can you review? |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Perfect, thanks

This PR is actually a fragment of #26 as #26 is a bit overwhelming. I changed the API and put evaluation a part of the Card class not to bother user with extra attributes.

@BenjaminBossan @adrinjalali